Category: Mixed Reality

Tangible Computing

My first experience with Tangible Computing came in Ken Perlin’s Physical Media class at NYU. We built a cool tabletop game called Krakatoa, a resource management game played with “pucks” marked with one, two, three, or four dots. If I remember correctly, each of the four players would slide their puck over to a command menu, then slide it to a place on the board, and their character would go do whatever the command was. I think the commands were things like “cut wood,” “steal wood,” or “burn wood”; as you can guess, wood was the primary resource in this game. I was still a beginning programmer at the time, so I didn’t tackle any of the heavy stuff, but I did all the audio recording and management, and I made the pucks, which were painted clay. They felt really nice in your hand when you slid them. Nowadays I would probably use a laser cutter to make them, but back then clay was the best option, and I think it might still be.

Working in AR is very much like working on Tabletop Computing, but there is something about the mediation of a device that makes it less engaging. It also has a lot in common with physical computing, but the fact that inert objects are used instead of interactive ones changes things as well. So when I got to Georgetown, I was pretty excited that one of my colleagues knew about one of the old Surface (now PixelSense) tables that I could have. These are the big table kind, not the SUR40, and they were (and still are) way better in a lot of ways. I remember Jeff Han working on the original design for these, again back at NYU, right before he gave his TED talk showing it off (that was my laptop he used for that talk, by the way; it’s the closest I’ll probably ever get to the TED stage). Anyway, I decided to run a little experiment by teaching courses in both Tangible and Physical Computing in the fall and spring of my second year to see which one worked better. It turns out physical computing was way better, and that class morphed into my Interaction Design class. I still use the Surface on occasion: I had a student use it on the Pilgrimage Project, and I made an app for the CCT lounge that introduces visitors to our faculty and staff.

The one major finding from that experiment was how totally useless the written tutorials were in both of those scenarios. Beyond useless, actually: dangerous. It’s not that they were bad; they were great. The Arduino tutorials on their website are succinct and simple, and the ones we made for the Tangibles class were terrific; all the students said so. The problem was that students retained basically nothing from the tutorials, were totally unable to connect them together or see patterns across them, and came away with the false impression that they could do things they simply were not sophisticated enough to do. Given how many tutorials there are and how heavily people rely on them in online courses and lots of other venues, I suspect this is going to be a real problem for online courses to overcome in the future.

Multiscale MR and GTtour

I first discovered the notion of multiscale methods as a grad student, in one of NECSI’s Complex Systems summer courses. Not surprisingly, they were extremely “mathy,” which is great, but I got to thinking about what the qualitative properties of these methods might be. Then, a year or so later, Mary Hegarty gave a talk at Georgia Tech about her work on spatial scale, and although these are slightly different notions of scale, I began to see a path forward. One prominent stop on that path was my PhD dissertation work on spatial scale in Mixed Reality. The connection is a simple one: if we experience space at different scales, and we use partially overlapping cognitive abilities at those different scales, then how do these play out in the interactive environments of Mixed Reality?

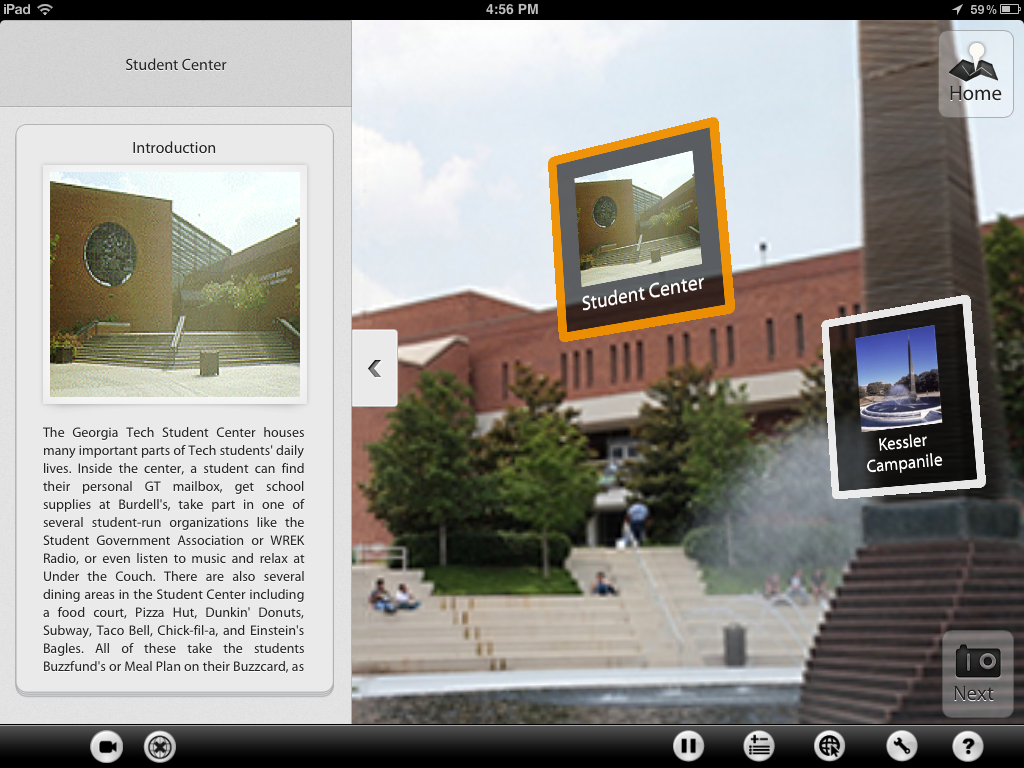

One of the major breakthroughs I had was developing the notion of scale transitions. Multiscale MR experiences need some clear way to move the user through information at different spatial scales. There are emerging techniques and best practices for doing this, just as there were in the early days of filmmaking, and these need to be catalogued, expanded upon, and critiqued, just like cuts in film. My dissertation explored these ideas in the context of GTtour, an MR tablet experience that let users take a virtual tour of the Georgia Tech campus. The good folks at RNOC helped me put the tour together. I’m not sure if it’s still in use, but it was really my first foray into multiscale methods.

VR Courtroom

I’ve been working with a wonderfully talented CS post-bacc student and Tanina Rostain from Georgetown’s Law School to develop a VR courtroom simulator for training law students. I had never realized it, but it makes perfect sense: it is really difficult for law students to get any experience in an actual courtroom. I can’t imagine the bureaucratic hurdles to arranging that, and I’m sure it would be very expensive to build a replica (although the production designer in me thinks it would be a fun thing to try).

We’re adapting a web-based card game that they made some time ago into a complete VR simulation of the witness statement and cross-examination parts of legal proceedings. We’re looking at whether this kind of immersive simulation can help law students overcome some of the anxiety associated with being in court for the first time. From there, the sky is the limit. I can imagine entire courtroom dramas played out in interactive VR, although spending the entire length of a trial with a VR headset on seems like it would incapacitate you for at least three days afterward.

Interactive Architecture

It will never cease to amaze me how much press you can get just by projecting something on the side of a building. Here is some video from the Public Space Invaders project from 2006 that I did with Kuan Huang.

Design Pre-Patterns in AR

Yan Xu and I worked so hard on this movie. I remember doing at least 20 takes of the voiceover in our sound booth and rewriting the script over and over to get the time down. She was a really demanding director. It was fun, though. I think it was probably the most effort anyone has ever put into the 5-minute video that accompanies a research paper. I’m still convinced that we created a new genre of video: futurist indoctrination. I say that because it reminds me of those 1950s-era school propaganda videos that hipsters all love ironically, but the unrelenting pace of the voiceover and the soundtrack make it seem like it’s aimed at ultra-tech-savvy schoolkids from the 2050s instead of the 1950s.

D5 Creation
